How to Select the Right GenAI Adoption Strategy

Choosing the right approach to integrate GenAI technologies is crucial in shaping a comprehensive GenAI strategy. As we move into an era where highly advanced AI capabilities are readily available, planning for their effective use becomes essential. As summarised in this GenAI Adoption Framework by Medium, organisations can either adopt off-the-shelf GenAI functionalities within their applications or access them via APIs for convenience.

On the other hand, more sophisticated strategies tailored to an organisation’s specific data and objectives can greatly enhance benefits, albeit with increased complexity in customisation.

Deciding between widespread implementation of basic tools and investment in deeply integrated, advanced capabilities is a pivotal strategic decision. Let’s take a more detailed look at the options available to enterprises.

Utilising GenAI Applications Directly

Organisations or individuals can directly leverage applications equipped with built-in generative AI capabilities. The current market offers a variety of such applications, like ChatGPT, which provide significant value with minimal upfront effort. This method enables swift deployment and immediate realisation of benefits. However, relying exclusively on generic AI tools may miss the specific nuances and insights that can be derived from an organisation's distinct data.

Incorporating GenAI APIs

Enterprises can develop their own applications by integrating generative AI using foundational model APIs. Leading proprietary generative AI models such as GPT-3, GPT-4, PaLM 2, and others are available through cloud-based APIs. This approach enables customisation of AI capabilities to meet specific business requirements and goals. Alternatively, enterprises can use open-source generative AI models. Although this may involve more setup and customisation effort, it offers increased flexibility and control over the AI systems.
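To make the API approach concrete, here is a minimal sketch of how an application might sit on top of a model API. It assumes a small provider-agnostic interface, so that a proprietary API or a self-hosted open-source model could be swapped in later. The names `TextGenerator`, `EchoModel`, and `summarise_ticket` are hypothetical and do not belong to any vendor's SDK. The stand-in model makes no network call.

```python
from typing import Protocol


class TextGenerator(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for a real model client; returns a canned reply, no network call."""

    def generate(self, prompt: str) -> str:
        return f"[model reply to {len(prompt)} characters of prompt]"


def summarise_ticket(model: TextGenerator, ticket_text: str) -> str:
    """Business-specific wrapper: builds the prompt and delegates to the model."""
    prompt = (
        "Summarise the following support ticket in one sentence.\n\n"
        f"Ticket: {ticket_text}"
    )
    return model.generate(prompt)


reply = summarise_ticket(EchoModel(), "Customer cannot log in after password reset.")
print(reply)
```

In a real application, `EchoModel` would be replaced by a thin adapter around whichever model API or open-source model the enterprise selects. The business logic in `summarise_ticket` would stay the same.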

Deployment of Retrieval Augmented Generation (RAG)

Retrieval Augmented Generation (RAG) is a method through which an enterprise can enhance the functionality of a foundational generative AI model by integrating external data, often sourced from the enterprise's internal repositories, into the AI's prompts. This integration notably enhances the precision and appropriateness of the model's outputs for tasks within specific domains, particularly those requiring specialised knowledge or contextual understanding.
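The retrieve-then-prompt flow described above can be sketched in a few lines. This is an illustrative toy under stated assumptions, not a production design. It ranks documents by bag-of-words cosine similarity, whereas real deployments typically use embedding models and a vector store. The sample documents and the helper names (`retrieve`, `build_prompt`) are invented for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, k=2):
    """Return up to k documents most similar to the query, dropping zero-score ones."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:k] if score > 0]


def build_prompt(query, documents, k=2):
    """Insert the retrieved passages into the prompt ahead of the question."""
    context = retrieve(query, documents, k)
    lines = ["Answer using only the context below.", "", "Context:"]
    lines += [f"- {c}" for c in context]
    lines += ["", f"Question: {query}"]
    return "\n".join(lines)


# Invented examples standing in for an enterprise's internal repository.
INTERNAL_DOCS = [
    "Refunds are issued within 14 days of a return request.",
    "The office is closed on public holidays.",
    "Warranty claims require the original invoice.",
]

prompt = build_prompt("How many days do refunds take?", INTERNAL_DOCS, k=1)
print(prompt)
```

The resulting prompt would then be sent to the foundational model. The model itself is unchanged; only its input is enriched with enterprise data.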

Specialisation via Fine-Tuning

Fine-tuning is an advanced method for customising AI models. It takes a large, pre-trained foundational model as a base and trains it further on a new, organisation-specific dataset. This approach integrates additional domain knowledge or enhances the model's capabilities for specific tasks, typically resulting in bespoke models finely tuned to meet the organisation’s precise requirements.

Creation of Custom Foundation Models

Enterprises can choose to develop their own foundational models completely from the ground up, carefully crafting them to fit their distinct datasets and business sectors. This method epitomises the highest level of customisation in AI development, enabling organisations to design models that closely match their particular operational requirements and industry intricacies.

The next decision is whether to buy or build, and whether to keep the model open or closed. Relying heavily on a general-purpose AI platform is unlikely to provide a competitive edge. Increasingly, enterprises are realising the value of using their own in-house data to train AI models. Hence, some CIOs are taking measures to restrict the use of external AI platforms within their companies. For example, Samsung prohibited the use of ChatGPT after employees used it to work on commercially sensitive code.

The other challenge is the scale of data and compute required to train large-scale AI models. OpenAI reportedly used 10,000 GPUs to train ChatGPT. For now, therefore, building large-scale AI models is feasible only for the most well-resourced technology firms.

There is light at the end of the tunnel, however: smaller models may offer a viable alternative. Language models have been adapted to specific domains and languages, which requires less data, as shown by models like BioBERT for biomedical content, LegalBERT for legal content, and CamemBERT for French text. For specific business use cases, organisations may opt to sacrifice broad knowledge for specificity in their business area. Companies will extend and customise these models with their own data and integrate them into applications relevant to their business. Smaller openly available models, such as Meta's LLaMA, could rival the performance of large models and allow practitioners to innovate, share, and collaborate.

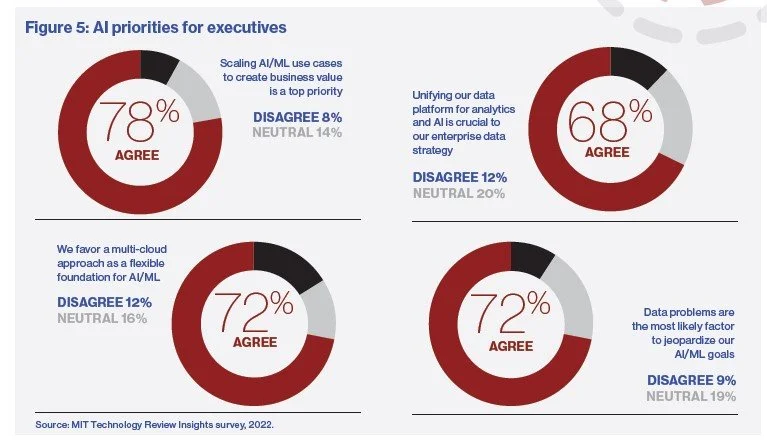

Chief Information Officers and technical leaders must act decisively by embracing generative AI to take advantage of its opportunities and avoid losing competitive ground. They must also make strategic decisions about data infrastructure, model ownership, workforce structure, and AI governance that will have long-term impacts. AI is no longer limited to pilot projects and isolated successes but is becoming a vital capability integrated into the fabric of organisational workflows.

Generative AI's new ability to uncover and utilise previously hidden data will drive remarkable organisational advances. Organisations are adopting next-generation data infrastructures, such as data lakehouses, to democratise access to data and analytics, enhance security, and combine low-cost storage with high-performance querying. As outlined above, some organisations are using open-source technology to build their own LLMs, maximising the use of their data and protecting their intellectual property. For AI to progress effectively, it must be underpinned by a unified and consistent governance framework.